The tortoise and the hare of care: Health AI insights from Rock Health’s 2025 Consumer Adoption Survey

Since the dawn of large language models, AI adoption inside healthcare organizations has progressed relatively cautiously, with most taking a step-wise approach from pilots to broad deployments supported by governance and guardrails. But that’s only part of the story of AI in health.

Consumers are experimenting with AI much more freely, at their own pace, in their own contexts, and with far fewer structural constraints. If institutional AI adoption is the tortoise of our tale—risk-managed and mediated by oversight with staged implementation timelines—consumers look more like the hare, sprinting ahead and largely self-directed.

For more than a decade, we’ve chronicled a rise in consumer adoption of digital health channels and tools: telehealth touchpoints during the pandemic normalized virtual care; wearables crossed into the mainstream; online search and health apps became regular channels for health information and management. AI chatbots are the latest chapter, introducing an always-on layer of personalized guidance that helps people make sense of (and act on) health information, whether they’re working through a new symptom or years of their own data. Consumers are already beginning to arrive at clinical encounters AI-informed, feeling a step ahead of a comparatively slow and steady system.

In this piece, we explore select data from Rock Health’s 11th Consumer Adoption of Digital Health Survey (hereafter referred to as the “Survey”), fielded in December 2025. We asked a cohort of 8,000 U.S. Census-matched adults about their behaviors and attitudes toward virtual care and digital health tools.[1] In a dataset rich with findings, one narrative stood out: consumers are adopting AI to manage their health on their own terms. Ahead, your mile markers: what questions they’re asking, what they’re doing with the answers, where opportunities lie, and what it means that consumers have already left the starting line.

The rise of the AI chatbot

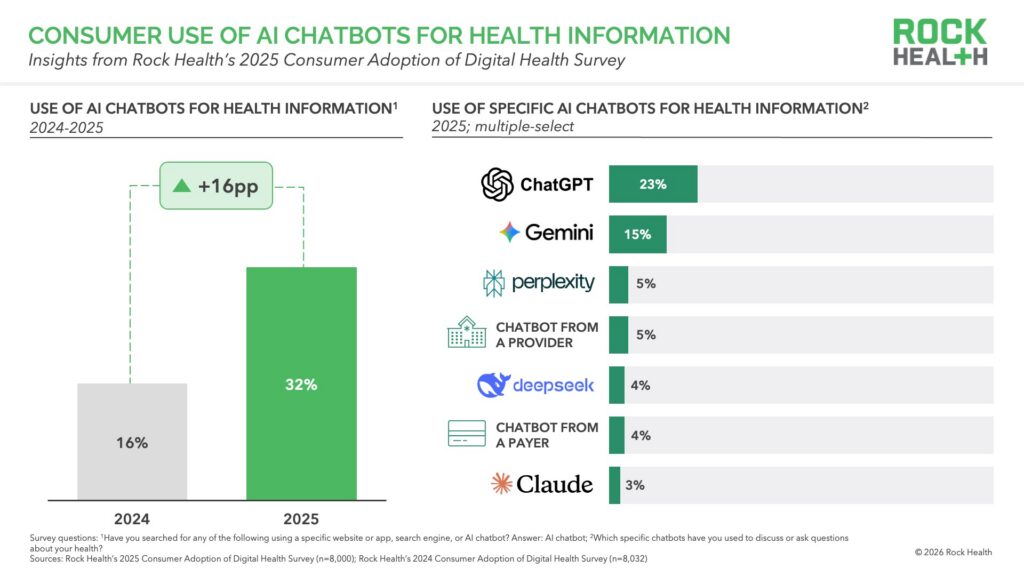

We won’t bury the lede: one in three (32%) respondents reported having ever turned to AI chatbots for health information,[2] double the share from just a year ago (16%). Other, more established digital channels for health information (e.g., online search, health websites) are still more pervasive than AI chatbots but, by comparison, grew in use by three percentage points in the past year. For many, AI has quickly become a routine part of how they manage their health. Sixty-four percent of AI users engage with it for health questions weekly or more often.

Notably, consumers are lacing up on their own. Nearly three-quarters of those who have used AI chatbots for health information (we’ll call them “AI users” as shorthand in this piece) reported turning to ChatGPT (23% of all respondents), in contrast to the 5% who used provider-offered chatbots and the 4% who used payer-offered ones. General-purpose tools became health-purpose tools by default: at the time of the Survey in December 2025, the LLM giants hadn’t yet introduced distinct healthcare experiences. Consumer demand for a tool that is low (or no) cost and available anytime was already pent-up and waiting at the blocks long before the purpose-built experiences arrived.
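The figures above mix two bases: shares of all respondents and shares of AI users. As a rough sanity check, the sketch below converts between them using only numbers quoted in the text. The variable and function names are illustrative, and the headcount is an approximation that assumes unweighted shares, which the Survey does not state.

```python
# Figures quoted in the text; names and the unweighted assumption are illustrative.
N_RESPONDENTS = 8_000          # Survey sample size
SHARE_AI_USERS = 0.32          # used an AI chatbot for health information
SHARE_AI_USERS_PRIOR = 0.16    # same measure one year earlier
SHARE_CHATGPT_ALL = 0.23       # used ChatGPT, as a share of all respondents


def rebase(share_of_all: float, subgroup_share: float) -> float:
    """Convert a share of all respondents into a share of a subgroup.

    Assumes everyone counted in share_of_all also belongs to the subgroup.
    """
    return share_of_all / subgroup_share


chatgpt_among_ai_users = rebase(SHARE_CHATGPT_ALL, SHARE_AI_USERS)
growth_ratio = SHARE_AI_USERS / SHARE_AI_USERS_PRIOR
approx_ai_users = round(N_RESPONDENTS * SHARE_AI_USERS)

print(f"ChatGPT share among AI users: {chatgpt_among_ai_users:.0%}")
print(f"Year-over-year ratio: {growth_ratio:.1f}x")
print(f"Approximate AI users in sample: {approx_ai_users}")
```

Under these assumptions, the ChatGPT share among AI users works out to roughly 72%, in line with "nearly three-quarters."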

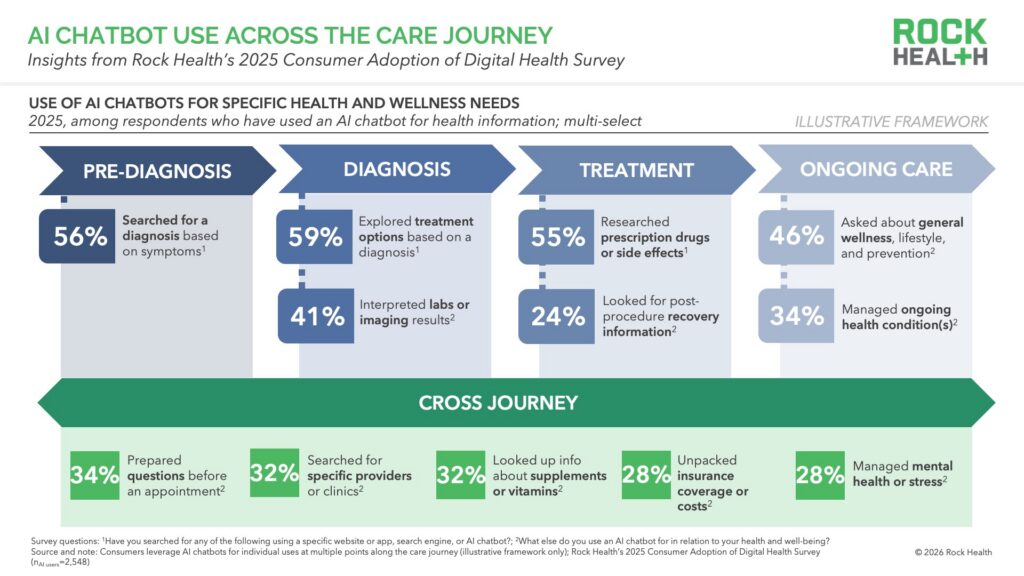

Researchers are actively stress-testing whether these tools are ready for what consumers are already asking of them—with recent benchmarking studies raising concerns about clinical accuracy, appropriate triage recommendations, safety for vulnerable users, and declining use of “I am not a doctor” disclaimers. But even with questions arising faster than resolutions, consumers are still using chatbots across the care journey. Among AI users, top queries include searching for treatment options based on a diagnosis (59%), a diagnosis based on symptoms (56%), and information about prescription drugs and/or side effects (55%). Even the lowest reported uses (e.g., insurance-related questions, searching for providers) were still relatively common, with almost a third of AI users engaging chatbots for these needs. Consumers aren’t drawing a line between clinical questions and administrative ones. Instead, AI is becoming a single engagement point for the entire healthcare experience.

“AI in healthcare is the greatest experiment in the history of medicine. We don’t know where this will go, but—because these tools can address so many healthcare problems and can do so better than anything we’ve had before—I feel moderately optimistic. The healthcare status quo isn’t working, and I can’t see how we get to better, safer, more accessible, more convenient, less biased, and less expensive care unless we use AI tools effectively.”

—Robert Wachter, MD, Professor and Chair, UCSF Department of Medicine; Author of “A Giant Leap”

Meeting the AI user

When we look more closely at who is using AI for health information, adoption reflects some of the same demographic trends we’ve tracked for years. The generational gradient is stark, with 45% of Gen Z adults and 48% of Millennials reporting use of an AI chatbot for health information, dropping to 25% among Gen Xers, 12% among Baby Boomers, and 7% among the Silent Generation.[3] AI use is also higher among racial and ethnic minorities:[4] 41% reported AI use compared to 27% of white respondents, even when controlling for other demographic variables.[5] Gender differences are more modest, with slightly higher use among men (34%) than women (30%). However, when we look at income and education, we don’t see meaningful differences in adoption across either characteristic. We’ll be watching to see how the next year of uptake unfolds. For earlier innovations, like virtual care and consumer wearables, who could afford them largely determined who got in first. With many AI chatbots free to access, that pattern may not hold in the same way.
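Percentage gaps and odds are easy to conflate. The minimal sketch below, using only the raw shares quoted above, shows how an unadjusted odds ratio could be computed. The helper names are hypothetical, and this is not the Survey's regression model: the adjusted estimate reported in the footnotes (approximately 33% higher odds) comes from a model with additional controls, so it need not match this raw calculation.

```python
def odds(p: float) -> float:
    """Convert a probability (0 < p < 1) into odds."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must be strictly between 0 and 1")
    return p / (1.0 - p)


def odds_ratio(p_group: float, p_reference: float) -> float:
    """Unadjusted odds ratio of one group relative to a reference group."""
    return odds(p_group) / odds(p_reference)


# Raw shares quoted in the text (not regression output).
P_MINORITY = 0.41   # racial and ethnic minority respondents reporting AI use
P_WHITE = 0.27      # white respondents reporting AI use

raw_or = odds_ratio(P_MINORITY, P_WHITE)
point_gap = (P_MINORITY - P_WHITE) * 100

print(f"Percentage-point gap: {point_gap:.0f}")
print(f"Unadjusted odds ratio: {raw_or:.2f}")
```

The raw ratio here comes out near 1.9, larger than the adjusted estimate, which suggests that part of the raw gap is associated with the variables the adjusted model controls for.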

Understanding the AI user

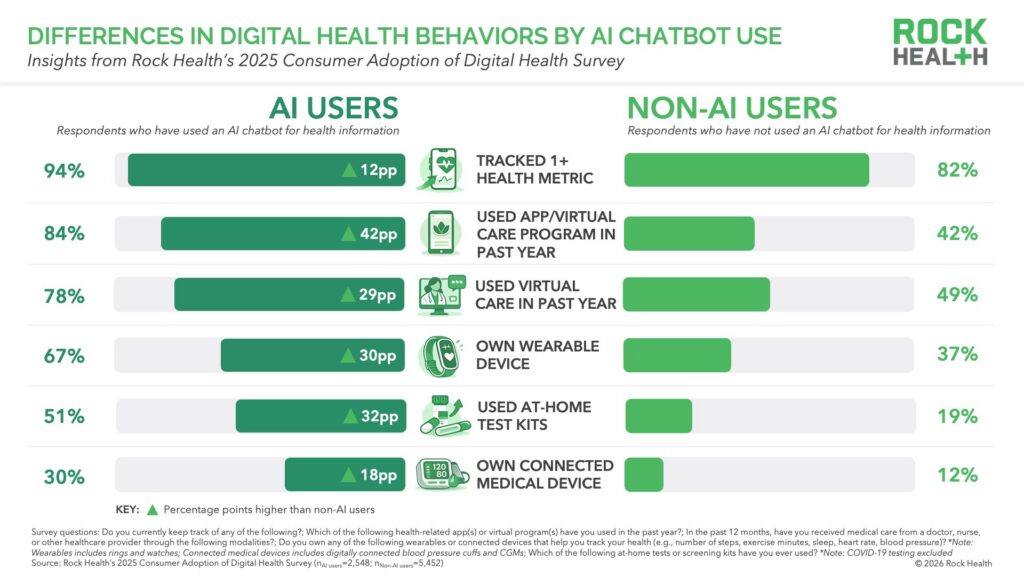

Demographics only tell part of the story. The AI user cohort looks a lot like “healthcare superusers”: consumers who use more care, generate more data, and track more of their health across both medical and wellness domains. AI users reported higher healthcare utilization across every care modality we asked about (virtual and in-person)[6] compared to non-AI users, and they were more likely to report tracking at least one health metric. AI users’ tracking spikes around modifiable lifestyle indicators: sleep (43% AI users vs. 28% non-users), physical activity (41% AI users vs. 32% non-users), diet (40% AI users vs. 28% non-users), and stress (35% AI users vs. 22% non-users). In contrast, non-users tend to focus on more traditional health metrics like medications and blood pressure. It would be fair to assume that AI users’ greater reported healthcare utilization is due to higher need, but AI users look similar to non-AI users in both self-reported health status and chronic condition burden.

These consumers, call them “worried well” or call them proactive, have been building their own personal health tech stacks, and AI is just the latest addition. AI users track more health metrics (four on average compared to three among non-users), and those data streams give AI tools more to work with. The insights are stronger. The value of tracking grows clearer. And the more interpretable the data becomes, the stronger the motivation to generate more. Consumer health companies are now embedding proprietary models and new integrations into their products to fuel this virtuous cycle. For now, the consumers most likely to benefit from this feedback loop are the ones already generating the most data.

Permissive trust and selective sharing

AI users tend to cast a wider net when it comes to both where they get health information and who they are willing to share their health data with. While both AI and non-AI users largely trust health information from clinicians (85% vs. 88%), AI users reported higher rates of trust in health information from digital sources, including health apps (55% vs. 25%), social media (36% vs. 11%), and AI chatbots themselves (56% vs. 15%).[7] Their greater willingness to trust non-traditional sources of information reflects the exploratory instinct of an early adopter.

The data sharing picture follows a similar pattern. Of all the entities we asked about in the Survey,[8] AI users were most willing to share their health data with their healthcare provider, albeit less so than non-AI users (56% vs. 71%). On the other hand, AI users were more open than non-AI users to sharing health data with non-traditional healthcare stakeholders, like health tech companies (23% vs. 11%) and consumer tech companies (15% vs. 4%).[9] These consumers aren’t abandoning traditional healthcare relationships so much as expanding who they let into their health information ecosystem, getting more value from more sources, on their own terms. It’s hard to argue with the appeal of AI’s personalization and always-on accessibility, though patient advocates warn its use can come at a cost outside the more structured protections of the formal healthcare system.

“These tools can create magical experiences—they’re very easy to access. But the second we leave the walls of the healthcare system, we’re trading off for a lot of other risks and lack of rights. There’s no duty of care, no duty of confidentiality, and often no clear path to accountability if something goes wrong.”

—Andrea Downing, Co-founder, The Light Collective; Leads the Patient AI Rights Initiative

The next step

What happens as a result of the AI query matters as much as the questions themselves. When we asked AI users about actions they’ve taken following their interactions with AI chatbots, they most commonly reported searching for more information online or through different sources (42%) as a next step, telling us AI is often augmenting, rather than replacing, existing information-seeking behaviors. Nearly as many AI users reported moving toward formal care (40% consulted a provider)[10] and taking direct action: trying a new health behavior (32%) or even adjusting medications (18%). Follow-on behavior is the norm: eight in ten AI users (81%) reported taking at least one relevant action following their interactions with AI chatbots. The path from question to next step is shortening, as AI turns what were once fragmented stages (search → interpretation → the decision to seek care or self-manage) into a more continuous flow.

The market is already starting to organize around this new evolution—owning the transition from insight to action. Healthcare organizations and tech platforms are racing to own the moment between AI query and clinical action by building on-ramps designed to make those transitions safer and more connected. Players like Amazon are rolling out embedded AI experiences, tightly connected to care linkages and escalation protocols that keep the consumer journey in-house, and adapting their strategies quickly for more reach. b.well launched bailey, a white-labeled conversational AI experience that routes users from AI-generated health questions directly into care navigation. Quest Diagnostics rolled out a Google-powered AI companion that surfaces longitudinal test insights and prompts follow-up conversations with providers. New launches are a response to the modern consumer who increasingly expects the ease and actionability of ChatGPT, Claude, or Gemini in every step of their care journey.

As providers increasingly encounter AI users who are more informed and more specific in their expectations of care, the clinician’s role may shift from the primary bearer of health information toward interpreter, validator, and contextualizer of information that patients have already explored. Less gatekeeper, more guide. For tech companies entering healthcare, building with clinical experts can help bridge the gap between rapidly evolving technology and the necessities of working in the healthcare system, ensuring tools are safe, practical, and effective for patients’ use—a mandate that will only grow as AI becomes more commonly integrated into care.

“Patients are not going to stop using these tools because they’re filling a real gap. And even though AI isn’t perfect, medical error isn’t zero either. Humans are imperfect. The real question is whether this represents an incremental step forward. We have an obligation to make the technology better, and to do so in a responsible, thoughtful way.”

—Kristen Valdes, Founder and CEO, b.well Connected Health

Rewriting a fabled future

The race between the tortoise and the hare in health AI isn’t complete and, in fact, isn’t about one winner at all. Those building for the hare (health AI consumers) will be best served taking a page from the tortoise’s playbook, carefully balancing speed with deliberation. The next chapter will reveal whether the system can keep these forces in balance—so that always-on availability does not outrun standards of care, and clinical rigor, privacy, and safety do not fall behind consumer momentum.

Dig deeper into the data behind this piece—including the emerging profile of the AI-enabled healthcare superuser, where consumer trust in AI breaks down even as usage climbs, and what it means for the organizations racing to meet them—with Rock Health Advisory. Reach out to learn more.

Footnotes

1. Survey respondents (N=8,000) are Census-matched by gender, age, U.S. region, race/ethnicity, and annual household income. The Survey was administered from December 1, 2025 to December 23, 2025. Respondents used their personal desktop, laptop, smartphone, or tablet to complete the Survey in English.

2. Respondents were considered to have used an AI chatbot for health information if they reported use of an AI chatbot for at least one of the following: diagnosis based on your symptoms; treatment options based on your diagnosis; information about prescription drugs and/or side effects; other health and wellness information.

3. In adjusted logistic regression models controlling for race, income, gender, education, and insurance status, Gen Z and Millennial respondents exhibited odds of use more than seven times higher than older cohorts.

4. Respondents were considered racial and ethnic minorities if they identified as American Indian or Alaska Native; Asian or Asian American; Black or African American; Hispanic or Latino/a/x; and/or Hawaiian Native or Pacific Islander.

5. In adjusted logistic regression models controlling for age, income, gender, education, insurance status, and self-reported health, racial and ethnic minority respondents had approximately 33% higher odds of reporting AI use compared to white respondents.

6. Virtual care modalities we asked about include: live video visits; live phone visits; text-based care. In-person care modalities include: hospital (non-emergency room); emergency room; urgent care; healthcare provider’s office.

7. “Trust” includes “completely trust” or “somewhat trust” responses.

8. Entities: “My healthcare provider,” “My family members,” “My pharmacy,” “My health insurance company,” “A research institution,” “A healthcare technology company,” “A pharmaceutical company,” “My employer,” “A consumer technology company,” “An AI company (e.g., ChatGPT developer),” “A government organization.”

9. Health information was described in the survey as “your medical records, test results, prescription medication history, genetic information, and physical activity data.”

10. “Provider” includes “doctor, nurse, or other healthcare professional.”